A new study reports a close parallel between how the human brain processes spoken language and how modern AI language models do. The research, conducted by a team of neuroscientists and AI specialists, suggests that the brain does not comprehend speech all at once. Instead, comprehension unfolds as a sequence of processing stages that mirrors the layered computation of large language models. By recording neural activity while participants listened to a spoken narrative, the scientists found that the timing of brain responses tracked the depth of processing within the models. The alignment was especially pronounced in established language regions of the cortex, such as Broca’s area, suggesting that biological and artificial systems may rely on similar computational principles.

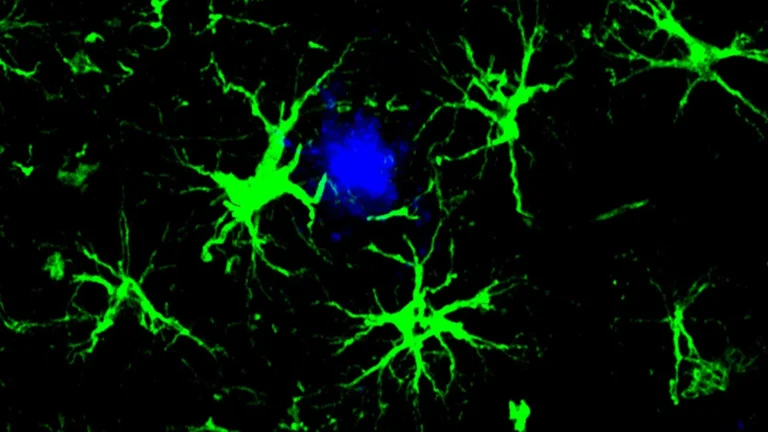

The findings, published in the journal Nature Communications, come from Dr. Ariel Goldstein of the Hebrew University, working with Dr. Mariano Schain of Google Research and Prof. Uri Hasson and Eric Ham of Princeton University. The results challenge long-standing assumptions about how language is understood. The team used electrocorticography (ECoG), a technique in which electrodes placed directly on the surface of the brain record neural activity with high temporal and spatial resolution. Participants listened to a continuous thirty-minute podcast, which allowed the researchers to follow the brain’s response to natural speech as it unfolded.

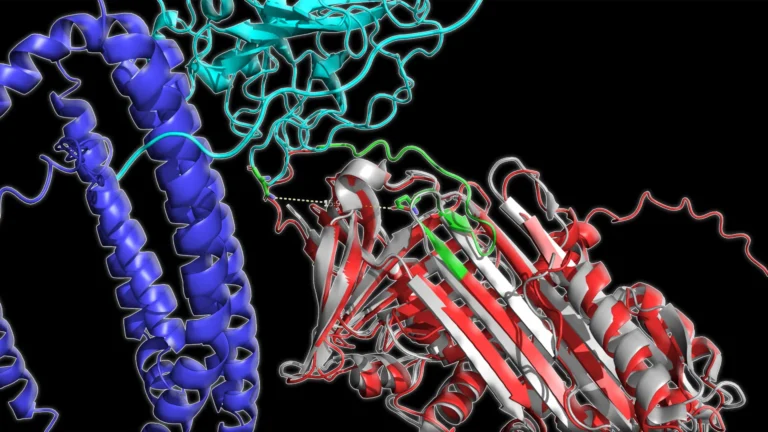

The study’s central claim is that the brain builds meaning step by step over time. Rather than grasping the full import of each word the moment it is heard, the neural system applies a series of transformations that progressively refine the information and place it in context. The research team showed that this sequence of neural events follows a time course that closely tracks the layer-by-layer structure of large language models (LLMs) such as GPT-2 and Llama 2. In these models, early layers typically capture simpler, word-level features, while deeper layers integrate context, semantic relationships, and more nuanced interpretation.

The analysis showed that early neural responses in listeners corresponded to the early layers of the AI models, which capture simpler aspects of the incoming speech, while later neural responses aligned with the deeper, more abstract layers. This correspondence was clearest in regions traditionally associated with higher-order language processing, including Broca’s area. In these regions, activity that correlated with deeper layers peaked later in time, consistent with a progressive, hierarchical buildup of meaning.
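The layer-to-timing comparison described above can be illustrated with a small sketch. It assumes that, for each model layer, an encoding score (how well that layer's representations predict the neural signal) is available at a range of time lags around word onset. The function names, the 12-layer setup, the lag grid, and the synthetic scores are all hypothetical and chosen for illustration; this is not the authors' pipeline or data.

```python
from math import exp, sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def peak_lag(scores_by_lag, lags_ms):
    """Return the lag (ms relative to word onset) with the highest score."""
    best = max(range(len(lags_ms)), key=lambda i: scores_by_lag[i])
    return lags_ms[best]


# Hypothetical setup: 12 layers, lags from -200 ms to +600 ms in 50 ms steps.
LAGS_MS = list(range(-200, 601, 50))
N_LAYERS = 12


def toy_scores(layer):
    """Synthetic encoding scores for one layer (assumption, not real data).

    The curve is a bump whose centre moves 30 ms later per layer, which
    builds in the pattern the article describes so the analysis has
    something to find.
    """
    centre = 50 + 30 * layer
    return [exp(-(((lag - centre) / 120) ** 2)) for lag in LAGS_MS]


scores = [toy_scores(layer) for layer in range(N_LAYERS)]
peaks = [peak_lag(layer_scores, LAGS_MS) for layer_scores in scores]
layer_lag_r = pearson(list(range(N_LAYERS)), peaks)

print("peak lag per layer (ms):", peaks)
print("layer-vs-peak-lag correlation:", round(layer_lag_r, 3))
```

With real recordings, the scores would come from a fitted encoding model per electrode rather than from `toy_scores`. A positive correlation between layer index and peak lag is the kind of signature the article refers to when it says deeper layers align with later neural responses.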

Dr. Goldstein said the result was unexpected: "What surprised us most was how closely the brain’s temporal unfolding of meaning matches the sequence of transformations inside large language models. Even though these systems are built very differently, both seem to converge on a similar step-by-step buildup toward understanding." The observation points to a possible convergence of computational strategies: evolution and engineering, despite their different origins, may arrive at similar solutions to the same information-processing problem.

The implications extend beyond theoretical neuroscience, because the results suggest that AI models can serve as tools for studying the human mind. For decades, dominant theories of language comprehension emphasized a rule-based, symbolic approach in which meaning is derived by manipulating fixed linguistic units within rigid hierarchical structures. The study casts doubt on whether such models are sufficient on their own and instead supports a more dynamic, statistical account in which meaning emerges through the continuous integration of context. This view is closer to how LLMs operate: they learn patterns and relationships from vast amounts of data.

The researchers also tested how well traditional linguistic features, such as phonemes (the smallest units of sound) and morphemes (the smallest units of meaning), predict neural activity. These classic features explained real-time neural activity during comprehension less well than the contextualized representations generated by the AI models. The result supports the view that the brain relies on continuous, context-dependent interpretation rather than on discrete, predefined building blocks alone, and that it uses the surrounding words to disambiguate meaning.
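One common way to compare feature sets like these is a held-out prediction test: fit a model from each feature set to part of the signal, then score its predictions on the rest. The toy sketch below is an illustration under stated assumptions, not the study's method. The word list, the sense labels (a stand-in for contextual embeddings), the simulated responses, and the helper names are all hypothetical. The "model" is a per-feature mean, which is equivalent to least-squares regression on one-hot features.

```python
from collections import defaultdict
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def fit_means(keys, signal):
    """Fit a lookup model: mean training response for each feature value."""
    sums, counts = defaultdict(float), defaultdict(int)
    for key, value in zip(keys, signal):
        sums[key] += value
        counts[key] += 1
    return {key: sums[key] / counts[key] for key in sums}


# Hypothetical tokens as (word, sense-in-context) pairs; "bank" is ambiguous.
TYPES = [("bank", "river"), ("bank", "money"), ("tree", "plant"), ("loan", "money")]
# Simulated response per token type (assumption: it depends on the sense).
RESPONSE = {TYPES[0]: 1.0, TYPES[1]: -1.0, TYPES[2]: 0.5, TYPES[3]: -0.5}

tokens = [TYPES[i % 4] for i in range(40)]
# Small deterministic wobble stands in for measurement noise.
signal = [RESPONSE[tok] + 0.05 * ((i * 7) % 5 - 2) for i, tok in enumerate(tokens)]

symbolic = [word for word, _sense in tokens]  # word identity only
contextual = tokens                           # word plus its sense in context


def held_out_r(keys, split=20):
    """Fit on the first `split` tokens, correlate predictions on the rest."""
    model = fit_means(keys[:split], signal[:split])
    fallback = sum(signal[:split]) / split  # used for unseen feature values
    predicted = [model.get(key, fallback) for key in keys[split:]]
    return pearson(predicted, signal[split:])


r_symbolic = held_out_r(symbolic)
r_contextual = held_out_r(contextual)
print("symbolic features, held-out r:  ", round(r_symbolic, 3))
print("contextual features, held-out r:", round(r_contextual, 3))
```

In this toy setup the word-identity model averages the two senses of "bank" together, so it predicts held-out responses less well than the context-aware model. That gap is built into the simulated data; whether it appears in real recordings is what the study set out to test.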

To support further research, the team has made its complete set of neural recordings and associated linguistic features publicly available. The open dataset lets researchers worldwide test competing theories of language understanding and develop computational models that more closely reflect how the human mind works. It may also inform work in fields ranging from education and artificial intelligence to clinical neurology and the treatment of language-related disorders. More broadly, the study shows how research on the human brain and research on artificial intelligence can inform each other.