Artificial intelligence is reshaping many sectors, and medical imaging is among the fields changing fastest. A study published on March 24 in Radiology, the journal of the Radiological Society of North America (RSNA), highlights a troubling side of that progress: AI can fabricate medical X-rays that are difficult to distinguish from genuine scans, both for experienced radiologists and for advanced AI models. The finding exposes a vulnerability in the diagnostic process and points to an urgent need for safeguards and training to preserve the reliability of medical imaging.

At the heart of this challenge is the "deepfake," a term best known from the world of misinformation that is now proving relevant in clinical settings. A deepfake is any video, photograph, audio file, or image that has been synthetically generated or deceptively altered with artificial intelligence so that it appears authentic. In medicine, this means AI models producing radiological images that mimic real patient X-rays closely enough to confound both human readers and algorithmic analysis, blurring the line between actual pathology and digital artifice.

Dr. Mickael Tordjman, a postdoctoral fellow at the Icahn School of Medicine at Mount Sinai, New York, and the lead author of the groundbreaking study, articulated the gravity of these findings. "Our research conclusively demonstrates that these synthetic X-rays possess a level of realism sufficient to deceive radiologists, who are the foremost specialists in interpreting medical imagery," Dr. Tordjman noted. This deception persisted even when the specialists were explicitly made aware of the potential presence of AI-generated images within their review sets. The implications of such sophisticated forgery extend far beyond mere academic curiosity, posing significant risks ranging from fraudulent legal claims, where a fabricated injury could become undeniable evidence, to critical cybersecurity threats. Malicious actors could potentially infiltrate hospital networks, injecting spurious images to manipulate patient diagnoses, or orchestrate widespread systemic disruption by eroding the fundamental trustworthiness of digital medical records.

The study involved 17 radiologists from 12 institutions in six countries (the United States, France, Germany, Turkey, the United Kingdom, and the United Arab Emirates), with experience ranging from early-career practitioners to veterans with four decades of clinical practice. In total, the study examined 264 X-ray images, split evenly between authentic patient scans and images generated by AI.

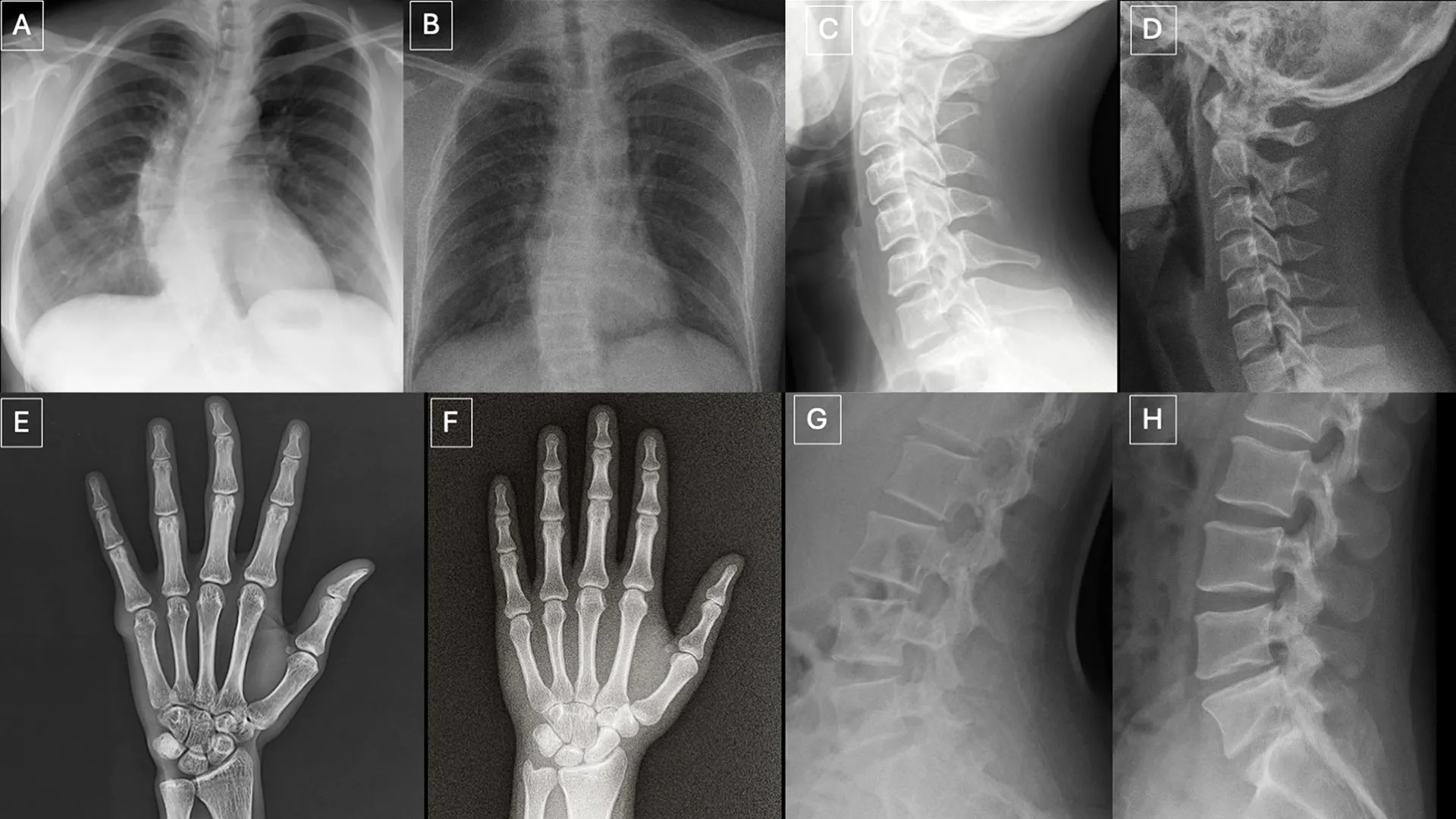

To assess detection, participants were shown two separate image sets with no overlap between them. The first mixed authentic X-rays with deepfake images produced by ChatGPT, covering various anatomical regions. The second consisted of chest X-rays only, divided equally between genuine scans and synthetic ones generated by RoentGen, an open-source generative AI diffusion model developed by researchers at Stanford Medicine. Using two sets allowed the researchers to evaluate detection across different generative AI tools and anatomical areas.

The detection results were sobering. When radiologists did not know that the image sets contained synthetic content and were assessing technical quality alone, the rate at which they recognized AI-generated X-rays was just 41%. Once they were told that synthetic images had been deliberately included, their average accuracy rose to 75%. Awareness helps, but it does not guarantee detection, and a considerable margin for error remains.

Individual performance varied considerably, even among highly trained professionals. On the ChatGPT-generated images, radiologists’ accuracy ranged from 58% to 92%. Multimodal AI systems showed similar limits. Four multimodal large language models (GPT-4o and GPT-5 from OpenAI, Gemini 2.5 Pro from Google, and Llama 4 Maverick from Meta) were given the same detection task, and their accuracy ranged from 57% to 85%. Notably, even ChatGPT-4o, which was itself used to create some of the deepfake images under scrutiny, failed to detect all of them, although it generally outperformed the other tested models. On the RoentGen-generated chest X-rays, radiologists achieved accuracy between 62% and 78%, while the AI models ranged from 52% to 89%. The spread across both human and AI readers underscores how difficult the task is.

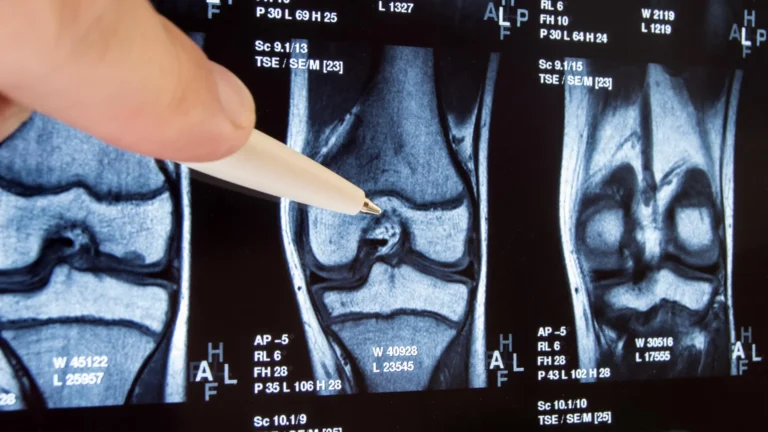

One striking finding was the absence of any discernible correlation between a radiologist’s years of experience and the ability to identify synthetic X-rays. This challenges the assumption that more experience always means better discernment, and it suggests that the visual cues of deepfakes may fall outside what traditional diagnostic training covers. The study did find a subspecialty difference: musculoskeletal radiologists were significantly more accurate than radiologists in other subspecialties. This might reflect their focused expertise in bone and joint structures, which could make the unnaturally perfect rendering of those areas in deepfakes easier to spot.

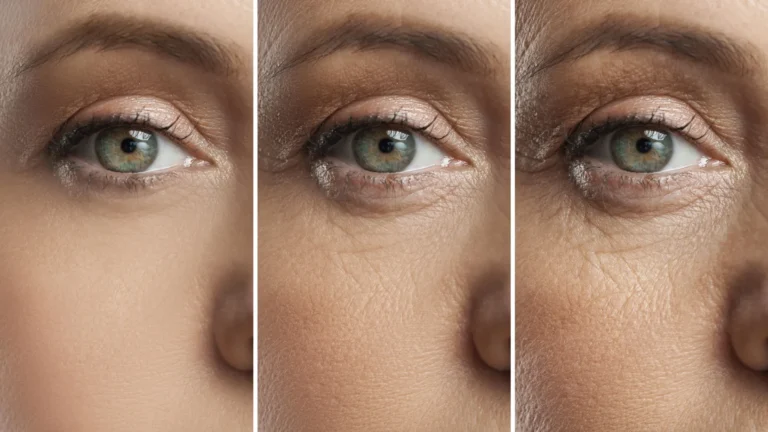

The research team meticulously cataloged several recurring visual patterns that served as potential "tells" within the synthetic images. As Dr. Tordjman elucidated, "Deepfake medical images frequently present an appearance that is ‘too perfect’." This uncanny perfection manifests in several ways: bones often appear unnaturally smooth, spines are rendered with an atypical straightness, lung fields might exhibit an exaggerated symmetry, and the intricate patterns of blood vessels could appear excessively uniform. Furthermore, fractures, when present, often possess an unusual cleanliness and consistency, frequently confined to a single side of the bone, lacking the subtle complexities and surrounding tissue reactions typical of real-world trauma. These visual anomalies, while individually subtle, collectively contribute to an overall impression of artificiality that highly attuned observers might eventually learn to recognize.

The profound implications of deepfake X-rays extend across multiple critical domains. The potential for their misuse in legal contexts is substantial; fabricated images could be introduced as evidence in personal injury claims or medical malpractice suits, leading to unjust outcomes and eroding public trust in the legal system’s ability to discern truth. More alarmingly, the insertion of synthetic images into hospital information systems presents a grave cybersecurity threat. Beyond data breaches, this form of data corruption could lead to widespread misdiagnoses, inappropriate treatments, and a complete breakdown of patient care protocols, thereby disrupting healthcare operations on a massive scale. Such scenarios highlight a shift from data theft to data integrity attacks, where the goal is to undermine the very foundation of medical decision-making.

To proactively mitigate these formidable threats, the researchers strongly advocate for the implementation of robust digital protection mechanisms. Among the proposed solutions are invisible watermarks, which could be imperceptibly embedded directly into images at the point of capture. These watermarks would carry verifiable metadata, allowing for a retrospective check of an image’s origin and authenticity. Complementary to this, cryptographic signatures linked to the imaging technologist at the time of image acquisition could provide an immutable chain of custody, ensuring the integrity and provenance of each digital medical file. These technological interventions represent a crucial first line of defense against the proliferation and misuse of deepfake medical imagery.
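The signature idea can be illustrated with a minimal Python sketch. It is not the researchers' proposal or any vendor's implementation: it uses an HMAC from the standard library as a simple stand-in for a true public-key digital signature, and the function names, the `technologist_id` field, and the demo key are all hypothetical. The point is only to show how a record created at acquisition can later reveal that an image or its attribution was changed.

```python
import hashlib
import hmac


def sign_image(pixel_data: bytes, technologist_id: str, secret_key: bytes) -> dict:
    """Create a provenance record for an image at acquisition time.

    Assumption: an HMAC keyed with `secret_key` stands in for a real
    digital signature tied to the technologist's credentials.
    """
    digest = hashlib.sha256(pixel_data).hexdigest()
    # Bind the technologist's identity to the image hash before tagging.
    message = f"{technologist_id}:{digest}".encode("utf-8")
    tag = hmac.new(secret_key, message, hashlib.sha256).hexdigest()
    return {"technologist_id": technologist_id, "sha256": digest, "tag": tag}


def verify_image(pixel_data: bytes, record: dict, secret_key: bytes) -> bool:
    """Return True only if the image bytes and identity match the record."""
    digest = hashlib.sha256(pixel_data).hexdigest()
    message = f"{record['technologist_id']}:{digest}".encode("utf-8")
    expected = hmac.new(secret_key, message, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking information through timing.
    return hmac.compare_digest(expected, record["tag"])


# Hypothetical demo values, for illustration only.
key = b"demo-key-not-for-production"
original = b"\x00\x01\x02 example pixel data"
record = sign_image(original, "tech-042", key)

print(verify_image(original, record, key))            # True
print(verify_image(original + b"\x00", record, key))  # False
```

A production system would more plausibly use asymmetric signatures, so that anyone can verify an image without holding the signing key, and would store the record alongside the image in a tamper-evident way. Those details are beyond this sketch.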

Looking ahead, Dr. Tordjman suggested that the current findings might represent merely "the tip of the iceberg." He emphasized that the logical next step for this AI capability is the generation of synthetic 3D images, including computed tomography (CT) and magnetic resonance imaging (MRI). The complexity and data richness of 3D scans would make detection even harder and amplify the risks. Building educational datasets and detection tools now is therefore essential to prepare healthcare systems for more advanced deepfakes. To support this, the research team has released a curated dataset of deepfake images, accompanied by interactive quizzes, for training and educational purposes. The initiative is a step toward raising awareness and equipping medical professionals to navigate AI-generated medical imagery, safeguarding patient care and the integrity of diagnostic medicine.