A new study of how people understand speech has found an unexpected correspondence with the way artificial intelligence language models work. Researchers recorded neural activity while participants listened to a spoken story and found that brain responses unfold over time in a sequence that mirrors the layered architecture of AI language models. The result challenges long-standing rule-based accounts of how humans decode speech, and it is supported by a newly released public dataset intended to help other scientists study how the brain constructs meaning.

The study, published in Nature Communications, was led by Dr. Ariel Goldstein of the Hebrew University in collaboration with Dr. Mariano Schain of Google Research and with Prof. Uri Hasson and Eric Ham of Princeton University. The team found a striking similarity between the way the human brain processes spoken language and the way modern AI models process text.

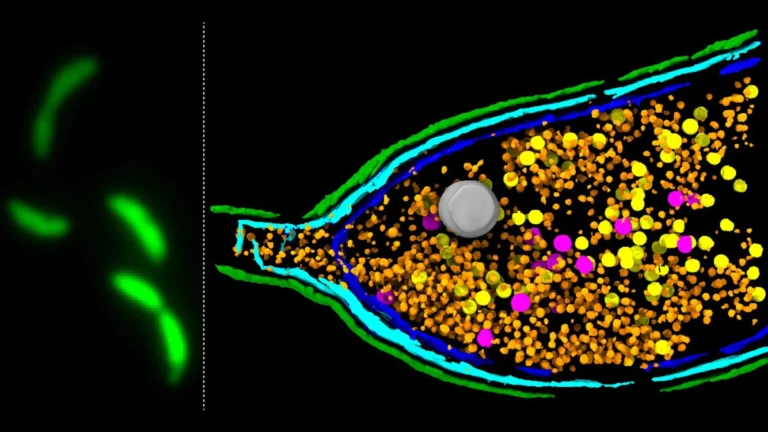

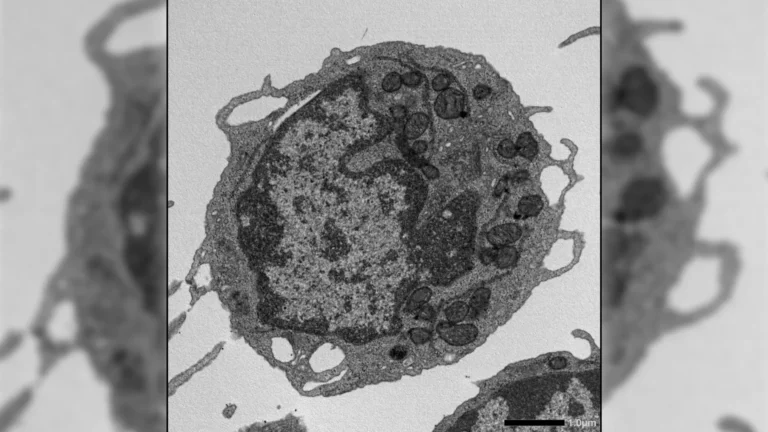

Using electrocorticography (ECoG), a technique that records electrical activity directly from the surface of the brain, the scientists tracked where and when neural activity occurred as participants listened to a thirty-minute podcast. They found a structured, sequential progression in the brain’s handling of language, one that closely resembles the layered design of large language models such as GPT-2 and Llama 2.

Speech comprehension is often thought of as an immediate, holistic grasp of meaning. The study suggests instead that the brain builds understanding through a series of neural transformations that unfold sequentially over time. Dr. Goldstein and his colleagues showed that these temporal stages of neural processing correspond to the successive layers of AI language models. In these models, early layers typically capture basic word-level features, while deeper layers integrate context, tone, and overall meaning.

Human brain activity followed the same layered pattern. Early neural signals, which reflect initial sensory processing and basic linguistic decoding, corresponded to the early layers of the AI models. Later brain responses, which reflect fuller integration of information and context, aligned with the deeper layers. This correspondence in timing was strongest in higher-order language regions such as Broca’s area, a region long associated with language production and comprehension. In these areas, the neural response that best matched the deeper, more abstract AI layers peaked later in time.
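The comparison of layers against response timing described above can be illustrated with a minimal Python sketch. It is not the authors' analysis pipeline: the data are synthetic, each model layer is reduced to a single number per word, and the function names `peak_lag_per_layer` and `simulate`, the lag grid, and the planted peak times are all assumptions made for illustration. The idea is simply to correlate each layer's feature with the neural response at several time lags after word onset and report the lag at which the correlation peaks.

```python
import numpy as np

def peak_lag_per_layer(layer_features, neural, lags_ms):
    """Return the lag of maximum Pearson correlation for each layer.

    layer_features: (n_layers, n_words), one illustrative value per word per layer
    neural:         (n_words, n_lags), response at each lag relative to word onset
    lags_ms:        (n_lags,) lag values in milliseconds
    """
    # Center and scale so that a dot product equals the Pearson correlation.
    x = layer_features - layer_features.mean(axis=1, keepdims=True)
    y = neural - neural.mean(axis=0, keepdims=True)
    x = x / np.linalg.norm(x, axis=1, keepdims=True)
    y = y / np.linalg.norm(y, axis=0, keepdims=True)
    corr = x @ y  # shape (n_layers, n_lags)
    return lags_ms[np.argmax(corr, axis=1)], corr

def simulate(seed=0, n_words=500):
    """Build synthetic data in which deeper layers are planted at later lags."""
    rng = np.random.default_rng(seed)
    lags_ms = np.arange(-200, 601, 100)           # hypothetical lag grid
    true_peaks_ms = np.array([0, 100, 200, 300])  # hypothetical peak per layer
    feats = rng.standard_normal((len(true_peaks_ms), n_words))
    neural = rng.standard_normal((n_words, len(lags_ms)))  # background noise
    for layer, peak in enumerate(true_peaks_ms):
        j = int(np.where(lags_ms == peak)[0][0])
        neural[:, j] += feats[layer]  # plant this layer's signal at its lag
    return feats, neural, lags_ms, true_peaks_ms

if __name__ == "__main__":
    feats, neural, lags_ms, _ = simulate()
    peaks, _ = peak_lag_per_layer(feats, neural, lags_ms)
    for layer, lag in enumerate(peaks):
        print(f"layer {layer}: peak correlation at {lag} ms")
```

In an analysis of real recordings, each layer would contribute a high-dimensional embedding rather than a single number, and the correlation would typically come from a cross-validated regression model rather than a direct dot product.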

Dr. Goldstein described the finding this way: "The most astonishing aspect of our investigation was the degree to which the brain’s temporal unfolding of semantic acquisition closely recapitulates the sequence of transformations observed within large language models. Despite their fundamentally disparate underlying architectures, both biological and artificial systems appear to converge upon a similar, step-by-step methodology for achieving comprehension." This convergence suggests that the computational principles behind extracting meaning may not depend on the substrate that implements them.

The implications go beyond acknowledging what AI can generate: the findings offer a new lens for examining how meaning forms in the human mind. For decades, linguistic theory has largely favored accounts built on fixed symbolic representations and rigid hierarchical structures. The results from Goldstein’s team challenge this view and point toward a more dynamic, statistically driven model in which meaning is not static but emerges gradually through the interplay of context and evolving neural representations.

The researchers also tested traditional linguistic units such as phonemes (the smallest distinctive sound units of a language) and morphemes (the smallest meaningful units of a language). Although these units are central to descriptions of language structure, they explained real-time neural activity during listening less well than the contextual representations produced by the AI models. This supports the hypothesis that, when constructing meaning, the brain relies more on a continuous flow of contextual information than on discrete, predefined linguistic building blocks.
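One common way to compare how well two feature sets explain a recorded signal is a cross-validated encoding model, sketched below in Python. This is an illustrative assumption rather than the procedure reported in the study. The names `cv_encoding_score` and `simulate_features`, the ridge penalty, and the feature dimensions are hypothetical, and the synthetic signal is built from the "contextual" features by construction, so the outcome demonstrates only the comparison procedure and says nothing about the real data.

```python
import numpy as np

def cv_encoding_score(X, y, alpha=1.0, n_folds=5):
    """Correlation between held-out ridge predictions and the true signal.

    X: (n_words, n_features) feature matrix, y: (n_words,) neural signal.
    """
    n = len(y)
    preds = np.empty(n)
    for test_idx in np.array_split(np.arange(n), n_folds):
        train_idx = np.setdiff1d(np.arange(n), test_idx)
        # Center using training statistics only, to avoid leaking test data.
        mu_x, mu_y = X[train_idx].mean(axis=0), y[train_idx].mean()
        Xc = X[train_idx] - mu_x
        # Closed-form ridge solution: (X'X + alpha*I)^-1 X'y
        w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]),
                            Xc.T @ (y[train_idx] - mu_y))
        preds[test_idx] = (X[test_idx] - mu_x) @ w + mu_y
    return np.corrcoef(preds, y)[0, 1]

def simulate_features(seed=0, n_words=600):
    """Synthetic data: the signal depends on the contextual features only."""
    rng = np.random.default_rng(seed)
    contextual = rng.standard_normal((n_words, 8))    # stand-in for embeddings
    symbolic = np.eye(5)[rng.integers(0, 5, n_words)] # stand-in one-hot units
    y = contextual @ np.ones(8) + 0.5 * rng.standard_normal(n_words)
    return contextual, symbolic, y

if __name__ == "__main__":
    contextual, symbolic, y = simulate_features()
    print("contextual features:", cv_encoding_score(contextual, y))
    print("symbolic features:  ", cv_encoding_score(symbolic, y))
```

Scoring both feature sets on held-out data, rather than on the data used for fitting, keeps a larger feature set from appearing better simply because it has more parameters.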

To accelerate future research, the team has publicly released its full dataset, including the complete neural recordings from participants and the linguistic features that were analyzed. The release is intended to let researchers worldwide test competing theories of language understanding and to support the development of computational models that more closely resemble human language processing. The team expects this open science effort to open new lines of inquiry and encourage collaboration in the study of both biological and artificial intelligence.