A recent investigation into the interplay between our senses suggests that the common practice of closing one’s eyes to better discern faint sounds may be counterproductive, particularly when ambient noise levels are high. The finding comes from a study by researchers at Shanghai Jiao Tong University that systematically explored the impact of visual input on auditory perception. The results appear in JASA, a journal published on behalf of the Acoustical Society of America by AIP Publishing. Conventional wisdom holds that by eliminating visual distractions, the brain can reallocate attentional resources towards auditory processing and thereby become more sensitive to subtle sounds. This intuitive approach appears to falter, however, in a noisy environment.

The experiment assessed participants’ ability to detect and differentiate sounds under varying auditory and visual conditions. Participants listened to a series of target sounds presented through headphones against a continuous stream of background noise. Their task was to adjust the volume of the target sound until it sat at the threshold of audibility, meaning it was just barely distinguishable from the surrounding noise. This basic task was then repeated under each of the visual conditions.

To examine the influence of sight, the experiment used a progression of four visual conditions. First, participants performed the detection task with their eyes closed, as people often do instinctively when straining to hear. They then repeated the task with their eyes open, looking at a neutral, blank screen. Next, they viewed a still image thematically related to the sound they were meant to detect. Finally, they watched a video clip that matched and was synchronized with the sound they were hearing.

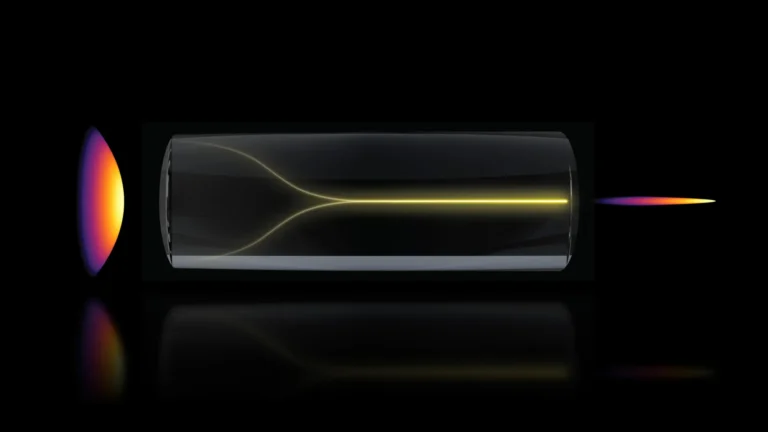

The results diverged from ingrained assumptions about sensory perception. Contrary to the belief that shutting out visual input aids auditory focus, the study’s lead author, Yu Huang, stated that "closing one’s eyes actually impairs the ability to detect these sounds." Relevant visual information, by contrast, conferred a substantial advantage: "seeing a dynamic video corresponding to the sound significantly improves hearing sensitivity." In short, closing one’s eyes hindered the detection of faint sounds amid background noise, while congruent visual cues measurably improved it.

To probe the neural mechanisms behind these effects, the research team used electroencephalography (EEG), a non-invasive technique that measures electrical activity in the brain. Monitoring brain activity across the experimental conditions showed how visual input influences auditory processing at the neural level. The EEG data revealed that when people close their eyes, their brains tend to shift into a state of heightened neural criticality, which is associated with more robust filtering of incoming sensory information.

However, this enhanced filtering mechanism is not a precisely targeted tool. While it may reduce the impact of irrelevant background noise, it can also inadvertently suppress the very auditory signals that participants are attempting to isolate and perceive. Huang elaborated on this phenomenon, explaining that "in a noisy soundscape, the brain needs to actively separate the signal from the background." The internal focus cultivated by eye closure, he noted, can paradoxically "work against you in this context, leading to over-filtering." In contrast, active visual engagement appears to serve as an external anchor, helping to orient and stabilize the auditory system within the context of the real world. This multisensory integration, where sight and sound work in concert, appears to be far more effective than relying solely on one sense while attempting to suppress another.

The researchers also acknowledged that the observed effect of eye closure on auditory perception is context-dependent, appearing to be most pronounced in environments with significant ambient noise. In conditions where the auditory environment is relatively quiet and free from distractions, the act of closing one’s eyes might indeed still prove beneficial for detecting exceptionally subtle sounds. This nuanced finding suggests that the benefits of sensory suppression are not absolute but are modulated by the prevailing environmental conditions.

Nevertheless, given how pervasive background noise is in daily life, from bustling city streets to busy offices and social gatherings, keeping one’s eyes open may be the better strategy for many people trying to make sense of what they hear. Everyday listening is often acoustically complex, and in such settings visual cues can play a crucial role in disambiguating and enhancing auditory information.

Looking ahead, the research team intends to further probe the connections between the visual and auditory modalities. A key question for future work is whether the observed benefits of visual input stem from the mere presence of visual information or, more specifically, from the congruence between visual and auditory stimuli.

Huang articulated the specific direction of their future research: "Specifically, we want to test incongruent pairings — for example, what happens if you hear a drum but see a bird?" This line of inquiry aims to disentangle the general attentional benefits derived from processing visual information from the more profound advantages offered by true multisensory integration. By presenting mismatched sensory inputs, the researchers hope to clarify whether the brain requires a harmonious convergence of sight and sound to achieve maximal perceptual enhancement, or if simply having one’s eyes open and actively processing visual data provides a sufficient boost to auditory processing. Understanding this distinction is paramount to fully appreciating the sophisticated mechanisms by which our senses collaborate to construct our perception of the world.