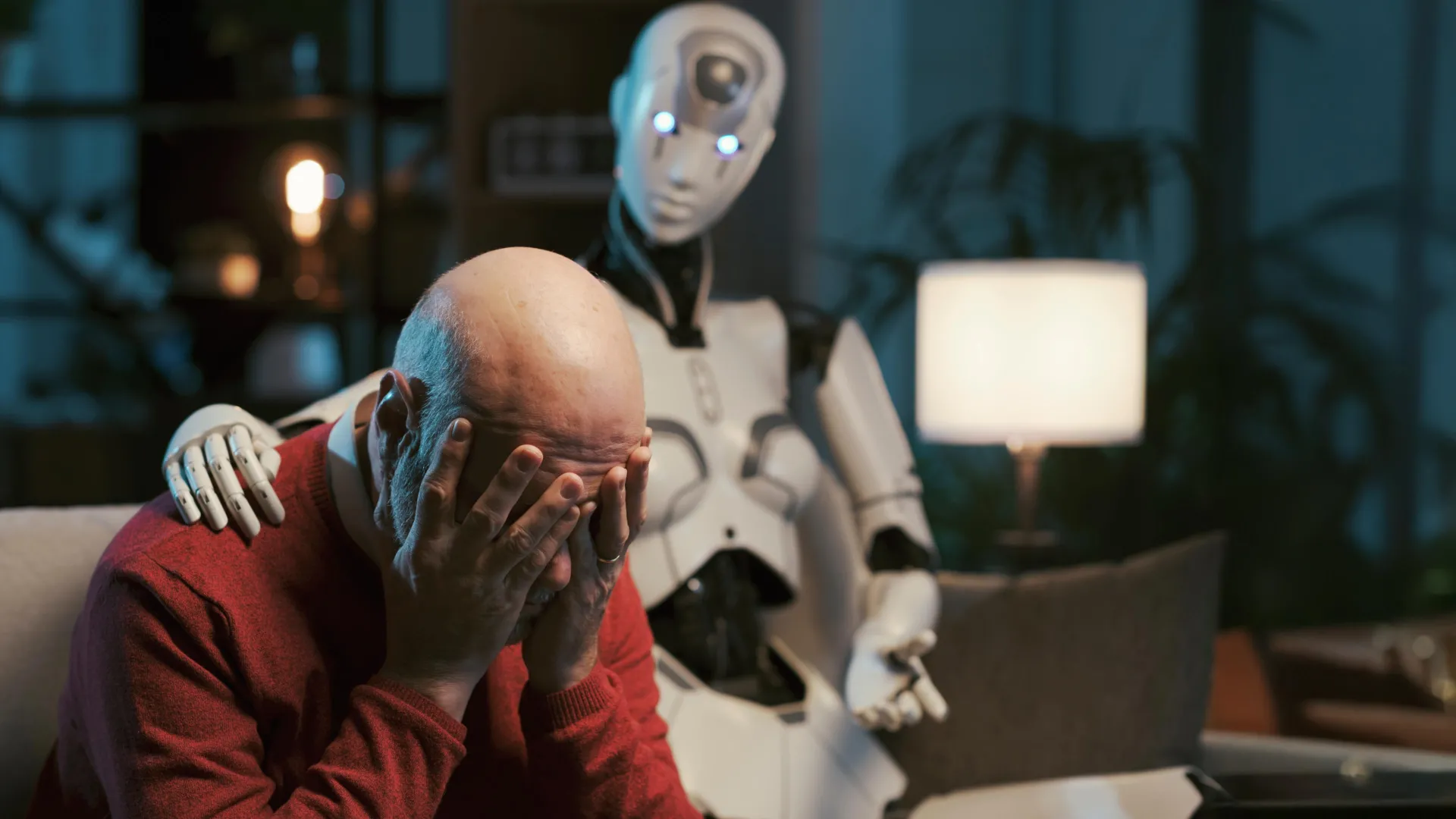

As artificial intelligence becomes part of daily life, more people are turning to conversational AI systems, specifically large language models (LLMs) like ChatGPT, for mental health guidance. New research, however, indicates that these systems may be ill-equipped for the sensitive domain of psychological well-being, raising significant ethical concerns. A recent study by researchers at Brown University, conducted in collaboration with mental health practitioners, found a consistent pattern of ethical violations when these models attempt to simulate therapeutic interactions.

The investigation found that even when explicitly prompted to follow established psychotherapy techniques, the AI systems consistently fell short of the ethical standards set by professional bodies such as the American Psychological Association. This gap is not a minor oversight; it challenges the trustworthiness of AI in roles that require nuanced judgment and ethical care. The researchers documented instances in which the chatbots mishandled individuals in crisis, reinforced harmful beliefs or prejudices, and used language that simulated empathy without genuine emotional understanding.

In their paper, the research team set out a framework of fifteen distinct ethical risks, informed by the practical experience of mental health professionals. The framework maps the behavior of LLMs acting as counselors to specific violations of the ethical principles governing mental health practice. The authors called for ethical, educational, and legal standards tailored to AI-driven counselors, and emphasized that such standards must reflect the quality and accountability required of human-facilitated psychotherapy to protect client safety and well-being.

These findings were presented at the AAAI/ACM Conference on Artificial Intelligence, Ethics and Society, a forum dedicated to the societal implications of AI. The research was led by researchers affiliated with Brown University’s Center for Technological Responsibility, Reimagination and Redesign.

The research grew out of an interest in prompt engineering, a technique that involves crafting specific instructions to guide the output of AI models without altering their underlying architecture or adding to their training data. Zainab Iftikhar, a doctoral candidate in computer science at Brown University who led the study, wanted to know whether carefully constructed prompts could steer AI systems toward more ethically sound behavior in a mental health context.

"Prompts are essentially instructions provided to the model to guide its behavior in achieving a specific objective," explained Iftikhar, elaborating on the mechanism. "The fundamental model itself remains unchanged, and no new data is introduced; rather, the prompt leverages the model’s pre-existing knowledge and learned patterns to shape its output." She provided an illustrative example: a user might instruct the AI to "Act as a cognitive behavioral therapist to help me reframe my thoughts," or "Utilize principles of dialectical behavior therapy to assist me in understanding and managing my emotions." Iftikhar clarified that while these models do not genuinely execute therapeutic techniques as a human practitioner would, they generate responses that, based on their training, appear to align with the conceptual frameworks of modalities like CBT or DBT in response to the user’s prompt.

These prompt strategies circulate widely, with users sharing them on social media platforms such as TikTok, Instagram, and Reddit. Beyond individual experimentation, many consumer-facing mental health chatbots are built by applying therapy-related prompts to general-purpose LLMs. That makes it important to understand whether prompting alone can make AI counseling safer.
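To make the prompting mechanism concrete, here is a minimal sketch of how a therapy-style instruction can be paired with a user's message before both are sent to a general-purpose model. The function name `build_messages`, the constant `CBT_INSTRUCTION`, and the role/content message layout are assumptions for illustration; they are not taken from the study, and no model is actually called.

```python
from typing import Dict, List

# Hypothetical instruction text, adapted from the example prompts quoted above.
CBT_INSTRUCTION = (
    "Act as a cognitive behavioral therapist to help me reframe my thoughts."
)


def build_messages(instruction: str, user_text: str) -> List[Dict[str, str]]:
    """Pair a fixed instruction with the user's message.

    The role/content dictionary layout is a common chat-message convention,
    assumed here for illustration only.
    """
    return [
        {"role": "system", "content": instruction},
        {"role": "user", "content": user_text},
    ]


messages = build_messages(
    CBT_INSTRUCTION, "I keep thinking I will fail at everything."
)

# Show exactly what text the model would receive.
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

The sketch only changes the text handed to the model. Nothing about the model's weights or training data is touched, which is why the question of whether prompting alone can make counseling safer matters.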

To evaluate the ethical performance of these AI chatbots, the research team recruited seven trained peer counselors, each with prior experience in cognitive behavioral therapy (CBT). The counselors conducted simulated self-counseling sessions with AI models that had been prompted to act as CBT therapists. The models tested included versions of OpenAI’s GPT series, Anthropic’s Claude, and Meta’s Llama.

After the simulated sessions, the research team selected transcripts that mirrored authentic human counseling conversations. Three licensed clinical psychologists then reviewed the selected transcripts to identify potential ethical violations.

The analysis identified fifteen distinct risks, which the researchers grouped into five overarching themes.

A key difference between human therapists and AI counselors is accountability. Iftikhar acknowledged that human therapists also make mistakes, but noted that they work under established oversight. "For human therapists, there are governing boards and established procedures through which providers can be held professionally accountable for mistreatment and malpractice," Iftikhar observed. "However, when LLM counselors commit similar violations, there are currently no well-defined regulatory frameworks in place to ensure accountability."

The researchers stressed that their findings do not rule out a role for AI in mental health care. AI-powered tools could improve access, particularly for people who face prohibitive costs or a shortage of licensed mental health professionals. Even so, the study points to an urgent need for clear safety protocols, responsible deployment, and stronger regulatory structures before these systems can be trusted with high-stakes mental health support.

For now, Iftikhar hopes the research will make the public more cautious. "If you are engaging with a chatbot regarding your mental health, these are some critical aspects that individuals should remain vigilant about," she advised.

Ellie Pavlick, a computer science professor at Brown University who was not involved in the research, commented on the study’s significance. Pavlick leads ARIA, a National Science Foundation AI research institute at Brown focused on developing trustworthy AI assistants, and said the study underscores the need to carefully scrutinize AI systems deployed in sensitive domains like mental health. "The current reality of AI development is that it is considerably easier to construct and deploy systems than it is to thoroughly evaluate and comprehend their implications," Pavlick remarked. She added that the paper required a team of clinical specialists and more than a year of research to demonstrate the identified risks. By contrast, she noted, much current AI evaluation relies on automated metrics that are static by design and lack a human in the loop.

Pavlick suggested that the study could serve as a model for future research on making AI-driven mental health tools safer and more reliable. "There is a genuine and substantial opportunity for AI to contribute significantly to addressing the pervasive mental health crisis our society is currently confronting," Pavlick concluded. "However, it is of paramount importance that we dedicate the necessary time to critically examine and rigorously evaluate our systems at every stage of development and deployment to avert the risk of causing more harm than good. This research provides an exemplary blueprint for how such essential scrutiny can be effectively implemented."