A recent study of how our senses interact challenges a deeply ingrained assumption: that shutting out visual input sharpens our ability to hear faint sounds. Many people instinctively close their eyes when straining to catch a subtle sound, believing that doing so eliminates distractions and lets the brain devote its full processing power to hearing. According to the new research, that belief appears to be mistaken, particularly amid the noise of everyday life.

To test this common belief empirically, a team of scientists from Shanghai Jiao Tong University examined how different visual conditions affect auditory detection. Their findings, published in JASA (the Journal of the Acoustical Society of America) by AIP Publishing, point to a counterintuitive conclusion: closing the eyes can actually impair auditory detection, especially in environments filled with competing noise.

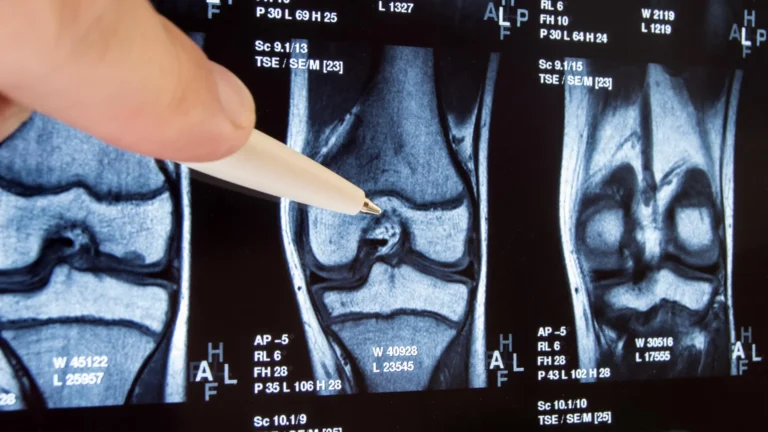

The experiment recreated situations that demand careful listening. Participants wore headphones and heard a range of target sounds against calibrated background noise. Their task was to adjust the intensity of the target sounds until they were just perceptible above the masking noise. They performed this task under several distinct visual conditions, designed to isolate the effect of visual input on hearing sensitivity.

First, subjects performed the detection task with their eyes closed, following the conventional wisdom of minimizing visual interference. They then repeated it with their eyes open, looking at a blank screen. Next, they viewed a static image thematically related to the sound they were hearing. In the final and most revealing condition, they watched a dynamic video synchronized with the sound.

The results departed sharply from popular intuition. Contrary to the widespread belief that closing the eyes enhances hearing, the research team found that "closing one’s eyes actually impairs the ability to detect these sounds," said lead author Yu Huang. The study also found that "seeing a dynamic video corresponding to the sound significantly improves hearing sensitivity." In other words, closing one’s eyes in a noisy setting makes it harder, not easier, to isolate a desired sound, while relevant visual information confers a measurable advantage.

To understand the neural basis of these effects, the researchers used electroencephalography (EEG), a non-invasive technique that records electrical activity in the brain, to track participants’ neural responses in each condition. The EEG data showed that closing the eyes shifts brain activity toward a state of heightened neural criticality, which in turn increases the brain’s tendency to filter incoming sensory information.

This stronger filtering is not selective, however. Rather than attenuating only the background noise, it can also suppress the very target sounds participants are trying to detect. "In a noisy soundscape, the brain needs to actively separate the signal from the background," Huang explained. The internal focus brought on by eye closure, he said, "actually works against you in this context, leading to over-filtering." In contrast, he noted, "visual engagement helps anchor the auditory system to the external world," giving it an external reference point.

The researchers caution that the detrimental effect of eye closure appears to depend on context. The study’s conclusions apply primarily to environments with significant ambient noise. In relatively quiet conditions, closing one’s eyes might still offer a marginal benefit for detecting especially subtle sounds. But because most of daily life unfolds against considerable background noise, the practical takeaway for most people is that keeping the eyes open is the more effective strategy for hearing.

Looking ahead, the research team wants to explore the relationship between vision and hearing further. A key open question is whether the benefit comes from any visual stimulation at all or whether congruence between what is seen and what is heard is the critical factor. "Specifically, we want to test incongruent pairings," Huang remarked, offering a hypothetical: "what happens if you hear a drum but see a bird?" This line of inquiry aims to separate the general attentional boost that comes from visual processing from the more specific advantages of multisensory integration, in which matching sensory inputs reinforce one another. Understanding that distinction, the scientists believe, will help clarify how our senses work together to construct our perception of the world. The findings suggest that common assumptions about sensory trade-offs may need to be re-examined.