Talking to oneself is usually treated as a distinctly human habit, but it may also help artificial intelligence learn. People use inner speech to structure thoughts, weigh options, and process emotions, and a new study suggests that a similar self-referential mechanism can improve how machines learn and adapt. In findings published in the journal Neural Computation, researchers at the Okinawa Institute of Science and Technology (OIST) report that AI systems perform better across a range of tasks when they are trained to use an internal "speech" component alongside short-term memory. The results suggest that how well an AI learns depends not only on its underlying design but also on the way it interacts with itself during training.

Dr. Jeffrey Queißer, a Staff Scientist within OIST’s Cognitive Neurorobotics Research Unit and lead author of the study, elaborated on the significance of these self-interactions. "This investigation underscores the profound influence of self-generated dialogue on the learning process," Dr. Queißer stated. "By carefully curating training data to foster this internal conversational ability within our AI systems, we have empirically shown that learning outcomes are not merely a product of architectural configurations, but are profoundly shaped by the intricate dynamics of interaction that are intentionally embedded within our training methodologies." On this view, AI learning is not purely an external input-output process; it also involves internal computational strategies that emerge during training.

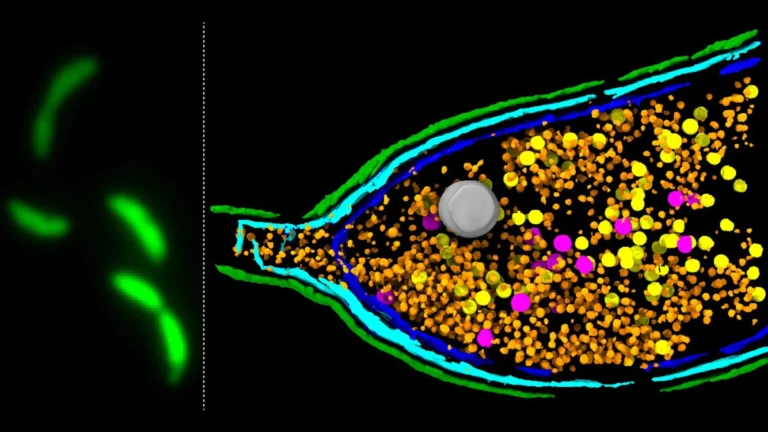

The OIST team combined self-directed internal speech, described as a low-level "muttering," with a working memory system. With both in place, their AI models learned more efficiently, coped better with unfamiliar situations, and handled several tasks at once. Compared with systems that relied on memory alone, the models showed more flexible responses and better overall task performance, which suggests that the ability to "talk to itself" adds a layer of processing beyond data retention.

A central goal of the researchers’ work is "content-agnostic information processing." The term refers to an AI’s ability to generalize what it has learned and apply it in situations that differ from those encountered during training. Rather than memorizing specific examples, such systems are designed to extract and apply general principles and rules, which makes their intelligence more adaptable.

"The seamless transition between tasks and the adept resolution of unforeseen challenges represent cognitive feats that humans accomplish with remarkable ease on a daily basis," Dr. Queißer observed. "However, for artificial intelligence, these capabilities remain considerably more arduous to attain. This is precisely why we adopt an interdisciplinary methodology, drawing insights from developmental neuroscience and psychology, and merging them with advancements in machine learning and robotics, among other fields. Our aim is to forge novel conceptual frameworks for understanding learning and, in doing so, to illuminate the future trajectory of artificial intelligence." This multidisciplinary approach is vital for bridging the gap between biological cognition and artificial systems.

The researchers began by examining memory system design in AI models, focusing on the role of working memory in generalization. Working memory is the short-term capacity to retain and actively manipulate information, which is needed for tasks ranging from following complex instructions to performing rapid mental calculations. Using tasks of varying complexity, the team compared the performance of different memory configurations.

The team found that models with more working memory slots, which act as temporary holding areas for discrete pieces of information, performed better on cognitively demanding problems. The advantage was clearest on tasks that require holding several items at once and manipulating them in order, such as reversing sequences or recreating patterns. This highlights the importance of not only storing information but also actively juggling and reordering it.
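To make the idea of memory slots concrete, here is a minimal Python sketch. It is a toy illustration only and does not reproduce the study's neural model: the `SlotMemory` class, the `reverse_with_memory` helper, and the rule that items are dropped when no slot is free are all assumptions made for this example. It shows why reversing a sequence is only possible when there are enough slots to hold every item.

```python
from typing import List


class SlotMemory:
    """Toy working memory with a fixed number of slots (hypothetical)."""

    def __init__(self, n_slots: int) -> None:
        self.n_slots = n_slots
        self.slots: List[str] = []

    def store(self, item: str) -> bool:
        """Keep the item if a slot is free; report whether it was kept."""
        if len(self.slots) >= self.n_slots:
            return False  # assumption: with no free slot, the item is lost
        self.slots.append(item)
        return True

    def recall_reversed(self) -> List[str]:
        """Read the stored items back in reverse order."""
        return list(reversed(self.slots))


def reverse_with_memory(sequence: List[str], n_slots: int) -> List[str]:
    """Try to reverse a sequence using only n_slots of working memory."""
    memory = SlotMemory(n_slots)
    for item in sequence:
        memory.store(item)
    return memory.recall_reversed()


sequence = ["A", "B", "C", "D"]
small = reverse_with_memory(sequence, n_slots=2)
large = reverse_with_memory(sequence, n_slots=4)
print(small)  # ['B', 'A']: two items never fit, so the reversal is incomplete
print(large)  # ['D', 'C', 'B', 'A']: enough slots for a full reversal
```

In this toy version, capacity is a hard limit. In a trained model the effect would be graded rather than all-or-nothing, but the dependence of ordered manipulation on capacity is the same in spirit.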

Performance improved further when the researchers added training targets that encouraged the AI system to talk to itself a set number of times. The largest gains appeared in multitasking and in tasks that require a multi-step sequence of operations. This suggests that the internal "rehearsal" provided by self-talk contributes to more efficient and robust problem-solving.
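One way to picture such a training target is sketched below in Python. The article does not describe the actual data format, so everything here is an assumption made for illustration: the `<mutter>` placeholder token, the `with_inner_speech` helper, and the choice to place the inner-speech steps before the answer.

```python
from typing import List

MUTTER = "<mutter>"  # hypothetical placeholder for one inner-speech step


def with_inner_speech(answer: List[str], n_mutters: int) -> List[str]:
    """Build a training target: n_mutters inner-speech steps, then the answer."""
    if n_mutters < 0:
        raise ValueError("n_mutters must be non-negative")
    return [MUTTER] * n_mutters + answer


answer = ["C", "B", "A"]
target = with_inner_speech(answer, n_mutters=2)
print(target)  # ['<mutter>', '<mutter>', 'C', 'B', 'A']
```

A model trained on targets shaped like this would have to produce a fixed number of internal steps before committing to an answer, which is roughly the kind of "rehearsal" the researchers describe.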

"The synergistic nature of our combined system is particularly compelling, as it demonstrates the potential to operate effectively with sparse data inputs, a stark contrast to the vast datasets typically necessitated for training such models to achieve generalization," Dr. Queißer remarked. "This approach offers a valuable, complementary, and computationally lightweight alternative to conventional data-intensive training paradigms." This aspect is crucial for developing AI that can learn in data-limited environments.

Looking ahead, the research team wants to move beyond controlled laboratory experiments and test its findings in more naturalistic, dynamic real-world settings. "In the empirical world, our daily existence is characterized by decision-making and problem-solving within environments that are inherently complex, variable, and often laden with extraneous noise," Dr. Queißer observed. "To more accurately emulate the nuances of human developmental learning, it is imperative that we account for these external environmental factors." This move towards greater ecological validity is a critical next step.

This direction fits the team’s broader aim of understanding human learning at the neural level. "By investigating phenomena such as inner speech, and by elucidating the underlying neurobiological mechanisms that govern such processes, we are poised to acquire profound new insights into the intricacies of human biology and behavior," Dr. Queißer concluded. "Furthermore, the knowledge gained from this research can be directly applied, for instance, in the development of sophisticated household or agricultural robots that are capable of operating autonomously and effectively within our complex and ever-changing global landscape." The ultimate goal is AI that can function within the complexities of human environments.