Human communication, spread across thousands of distinct languages, presents a paradox when viewed through the lens of information theory. One might expect a drive for efficiency to favor a highly compressed, digital-like encoding of meaning, akin to the binary codes computers use, yet human languages are complex and apparently redundant. This departure from pure informational optimization has long been a subject of scholarly inquiry, prompting researchers to examine the mechanisms that shape how we use language. A recent study led by linguist Michael Hahn of Saarbrücken and Richard Futrell of the University of California, Irvine, offers a model that addresses this puzzle. The findings were published in the journal Nature Human Behaviour.

At its core, every language, whether the most widely spoken or one on the brink of extinction, serves the same purpose: to convey meaning. It does so through a hierarchical structure in which individual words, each carrying meaning of its own, are assembled into phrases, which in turn form sentences. The meaning of the whole message is built from these parts. Given that natural systems, including biological ones, tend to economize on resources, the question arises: why does the human brain encode linguistic information in a way that appears so convoluted, rather than in a streamlined, digital form? In theory, a tightly compressed code, such as an optimized sequence of ones and zeros, could pack far more information into a short message. That humans instead use a less compressed yet highly structured form of communication suggests that other principles are at play.

The researchers propose that the structure of human language is closely tied to our lived reality and sensory experience. Hahn explains that language is not an arbitrary construct but is rooted in our interactions with the tangible world. He illustrates this with a hypothetical: suppose an invented word, "gol," were used to mean "half a cat paired with half a dog." The concept would be opaque to any listener, because it corresponds to no perceivable entity or common experience. Similarly, simply mixing the letters of familiar words, such as blending "cat" and "dog" into "gadcot," would contain elements of both yet leave the meaning inaccessible. By contrast, the phrase "cat and dog" is understood immediately, because both are familiar concepts. This connection to shared knowledge and embodied experience is what makes human language intelligible.

The human brain, it appears, favors pathways that build on existing knowledge. Hahn argues that what looks like a more roundabout way of expressing something is in fact less demanding for the brain. A purely digital code could, in principle, transfer information faster, but it would be cut off from the contextual understanding we draw from everyday life. Hahn offers the analogy of commuting to work: a familiar route is driven almost on autopilot, with minimal conscious effort, because the brain anticipates each turn and landmark. A shorter but unfamiliar detour demands closer attention and is more taxing. In mathematical terms, information conveyed through familiar, natural linguistic patterns imposes a significantly smaller computational load on the brain.

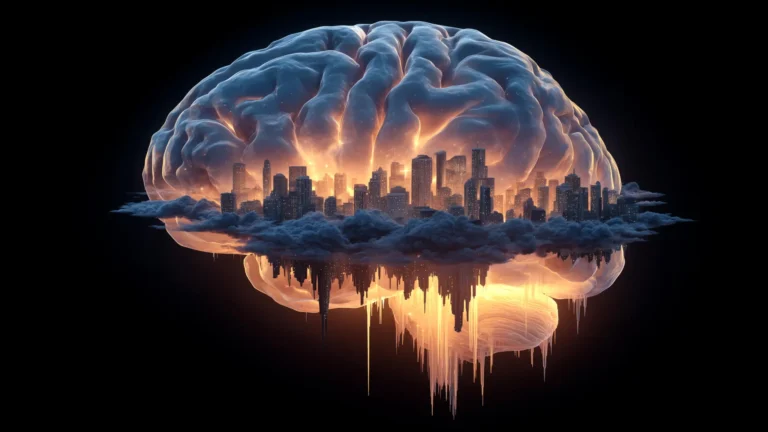

In essence, the imposition of a binary communication system would demand a substantial increase in mental exertion for both the speaker and the listener. Instead, our brains operate on a principle of predictive processing, constantly estimating the probability of subsequent words and phrases appearing. Over years of daily use, these linguistic patterns become deeply ingrained, facilitating smoother and less effortful communication. This predictive mechanism is a cornerstone of how we process and generate language.

Hahn provides a lucid example using the German language. The phrase "Die fünf grünen Autos" ("The five green cars") would be readily comprehensible to another German speaker. The initial article "Die" (The) immediately narrows down grammatical possibilities, signaling that the following noun will likely be plural or feminine singular. The numeral "fünf" (five) introduces the concept of quantity, ruling out abstract notions. "Grünen" (green) further refines the possibilities, indicating a plural noun that is green in color, potentially suggesting cars, bananas, or frogs. The final word, "Autos" (cars), resolves any ambiguity, solidifying the meaning. With each successive word, the brain progressively reduces uncertainty, converging on a singular interpretation.
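The word-by-word narrowing described above can be sketched numerically. The following is a minimal illustration, not the authors' model: it assumes a hypothetical eight-noun lexicon with hand-assigned features, treats every remaining candidate as equally likely, and measures uncertainty as the entropy (in bits) of that uniform distribution. The names `LEXICON`, `STEPS`, and `uncertainty_trace` are invented for this sketch.

```python
import math

# Hypothetical mini-lexicon: (noun, number/gender, countable, can_be_green).
# These entries are illustrative assumptions, not data from the study.
LEXICON = [
    ("Autos",    "plural",  True,  True),
    ("Bananen",  "plural",  True,  True),
    ("Frösche",  "plural",  True,  True),
    ("Ideen",    "plural",  True,  False),
    ("Banane",   "fem_sg",  True,  True),
    ("Freiheit", "fem_sg",  False, False),
    ("Auto",     "neut_sg", True,  True),
    ("Hund",     "masc_sg", True,  False),
]

# Each word heard so far acts as a filter on which nouns can still follow.
STEPS = [
    ("Die",    lambda e: e[1] in ("plural", "fem_sg")),  # plural or feminine singular
    ("fünf",   lambda e: e[1] == "plural" and e[2]),     # countable plural only
    ("grünen", lambda e: e[3]),                          # things that can be green
    ("Autos",  lambda e: e[0] == "Autos"),               # the noun itself
]


def entropy_bits(candidates):
    """Entropy of a uniform distribution over the remaining candidates."""
    return math.log2(len(candidates))


def uncertainty_trace(lexicon, steps):
    """Return (word, remaining entropy in bits) after each word is heard."""
    remaining = list(lexicon)
    trace = [("<start>", entropy_bits(remaining))]
    for word, keep in steps:
        remaining = [entry for entry in remaining if keep(entry)]
        trace.append((word, entropy_bits(remaining)))
    return trace


trace = uncertainty_trace(LEXICON, STEPS)
for word, bits in trace:
    print(f"{word:8s} {bits:.2f} bits")
```

In this toy setup the remaining uncertainty never increases from one word to the next and reaches zero once "Autos" is heard, mirroring the convergence on a single interpretation. A realistic model would use probabilities estimated from real language data rather than hand-written filters.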

In contrast, an unconventional ordering like "Grünen fünf die Autos" ("Green five the cars") disrupts this predictable flow of information. The expected grammatical cues appear out of sequence, preventing the brain from efficiently constructing meaning. This breakdown in predictable patterns highlights the cognitive burden imposed by non-standard linguistic structures.
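One rough way to quantify this disruption is surprisal, the negative log probability of each word given what came before. The sketch below is an assumption-laden illustration rather than the method used in the study: it trains a bigram model with add-one smoothing on a hypothetical four-sentence corpus (lowercased for simplicity) and compares the total surprisal of the familiar and scrambled word orders. `CORPUS`, `train_bigrams`, and `total_surprisal` are names invented here.

```python
import math
from collections import Counter

# Hypothetical toy corpus standing in for a speaker's years of exposure.
CORPUS = [
    "die fünf grünen autos",
    "die drei grünen frösche",
    "die fünf roten autos",
    "die zwei grünen bananen",
]


def train_bigrams(sentences):
    """Count how often each word follows each context word."""
    contexts, bigrams = Counter(), Counter()
    for sentence in sentences:
        tokens = ["<s>"] + sentence.split()
        contexts.update(tokens[:-1])             # every token that has a successor
        bigrams.update(zip(tokens, tokens[1:]))  # (previous word, word) pairs
    vocab = {word for sentence in sentences for word in sentence.split()}
    return contexts, bigrams, vocab


def total_surprisal(sentence, contexts, bigrams, vocab):
    """Sum of -log2 P(word | previous word), using add-one smoothing."""
    tokens = ["<s>"] + sentence.split()
    bits = 0.0
    for prev, word in zip(tokens, tokens[1:]):
        p = (bigrams[(prev, word)] + 1) / (contexts[prev] + len(vocab))
        bits += -math.log2(p)
    return bits


contexts, bigrams, vocab = train_bigrams(CORPUS)
familiar = total_surprisal("die fünf grünen autos", contexts, bigrams, vocab)
scrambled = total_surprisal("grünen fünf die autos", contexts, bigrams, vocab)
print(f"familiar order:  {familiar:.2f} bits")
print(f"scrambled order: {scrambled:.2f} bits")
```

With this toy corpus the scrambled order accumulates more surprisal than the familiar one, because none of its word transitions has been seen before. A bigram model only looks one word back, so it is a crude stand-in for the richer predictions a human listener makes.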

The mathematical modeling by Hahn and Futrell supports these observations, indicating that human language prioritizes minimizing cognitive load over maximizing data compression. The implications extend beyond academia to the development of artificial intelligence. In particular, understanding how the human brain handles linguistic complexity could inform improvements to large language models (LLMs), the systems that power generative AI tools. By designing AI that more closely follows the natural patterns of human communication, researchers may be able to build more intuitive and effective language processing systems and narrow the gap between artificial intelligence and human cognition.